Three countries, three very different health systems and a striking convergence of findings. The results of All.Can’s first Action Guide pilots are now available, and together they make a compelling case for measurement-driven reform.

Published by All.Can International | April 2026

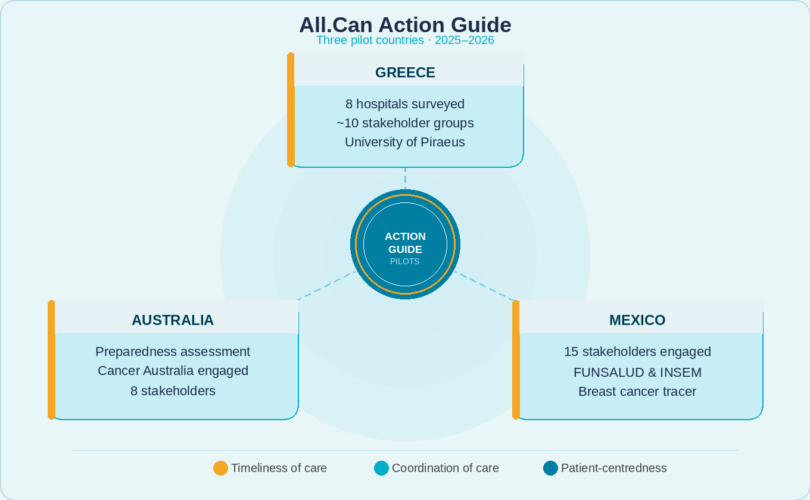

What does it actually take to make cancer care more efficient? Not in theory, but in practice, with real data, real systems and real stakeholders? That is the question All.Can set out to answer when it launched its Action Guide for Efficient Cancer Care. After years of development and a global consultation, 2025 brought the first real test: pilots in Australia, Greece and Mexico.

Those pilots are now complete. The reports are published. Taken together, the results represent a substantive body of evidence, generated by All.Can, on what efficient cancer care looks like and what stands in its way.

Three countries, one framework

The Action Guide assesses cancer system performance across three core dimensions: timeliness of care, coordination of care and patient-centredness. Each pilot applied this shared framework to its own context, drawing on quantitative data, stakeholder interviews and structured multi-sector engagement.

AUSTRALIA: Strengths under scrutiny. A well-resourced system with strong registries, assessed for its readiness to embed efficiency metrics at scale. Eight stakeholders engaged, including Cancer Australia.

GREECE: A flagship launch. Eight hospitals surveyed; around ten stakeholder groups contributed to recommendations, supported by the University of Piraeus and the University of West Attica.

MEXICO: System-level diagnostics. An ambitious analysis of a complex, fragmented health system, supported by FUNSALUD and Tecnológico de Monterrey. Fifteen stakeholders participated across policy, clinical, academic and patient communities.

The three countries represent very different health system archetypes – in funding models, institutional capacity, data infrastructure and historical context. That diversity was intentional. If the Action Guide is to be a genuinely universal tool, it must prove itself in varied conditions. It has.

What the pilots found

Despite the differences between them, the three pilots identified a set of recurring challenges that cut across systems. This convergence is one of the most important findings of the whole exercise.

Shared findings across all three countries:

- Data infrastructure gaps: unique patient identifiers, systematic staging data, and interoperable records remain underdeveloped even in relatively well-resourced systems, limiting the ability to track pathways and identify delays early.

- Coordination constraints: multidisciplinary tumour boards exist in each country, but their reach, standardisation, and monitoring vary considerably. Fragmentation across workforce policy, referral pathways and service organisation persists.

- Patient experience not yet systematically measured: patient-reported outcome and experience measures remain pilot-level or research-level in most settings, rather than embedded as standard performance tools.

- Strategic intent ahead of operational execution: national cancer strategies, regulatory frameworks, and financing instruments are often in place, but do not consistently translate into operational tools that reduce delays or improve care coordination.

These are not new problems. But what the pilots provide is structured, comparable, evidence-based documentation of precisely where those problems sit, at what level of the system, and what kinds of intervention are most likely to address them.

Why this matters beyond the three countries

The pilots were always conceived as more than national exercises. They were designed to test whether a shared diagnostic instrument could generate insight that is both locally relevant and internationally comparable. The answer is yes.

For policymakers in countries that have not yet piloted the Action Guide, these reports offer a mirror. The bottlenecks are familiar. The governance challenges are recognisable. The case for investing in data infrastructure, strengthening multidisciplinary care, and embedding patient experience into routine measurement is, if anything, strengthened by seeing it made across three continents simultaneously.

For All.Can National Initiatives considering applying the methodology in their own context, the reports also provide a practical reference point.

What comes next

These reports mark the beginning of a new phase of work, not its conclusion. In 2026, All.Can will expand the programme with further pilots planned in other countries.

Crucially, the Action Guide is also being positioned alongside All.Can’s new report on person-centred cancer care pathways, creating a bridge between normative reform guidance and practical system measurement. In time, the two instruments together will constitute a comprehensive reform and accountability architecture: define what good looks like, then measure how close systems are to achieving it.

We are grateful to the teams and stakeholders in Australia, Greece and Mexico who gave their time, expertise and institutional commitment to making these pilots a reality. Their work has produced something durable and replicable. We encourage everyone in the All.Can community to read the reports, share them widely, and consider what they mean for their own contexts.